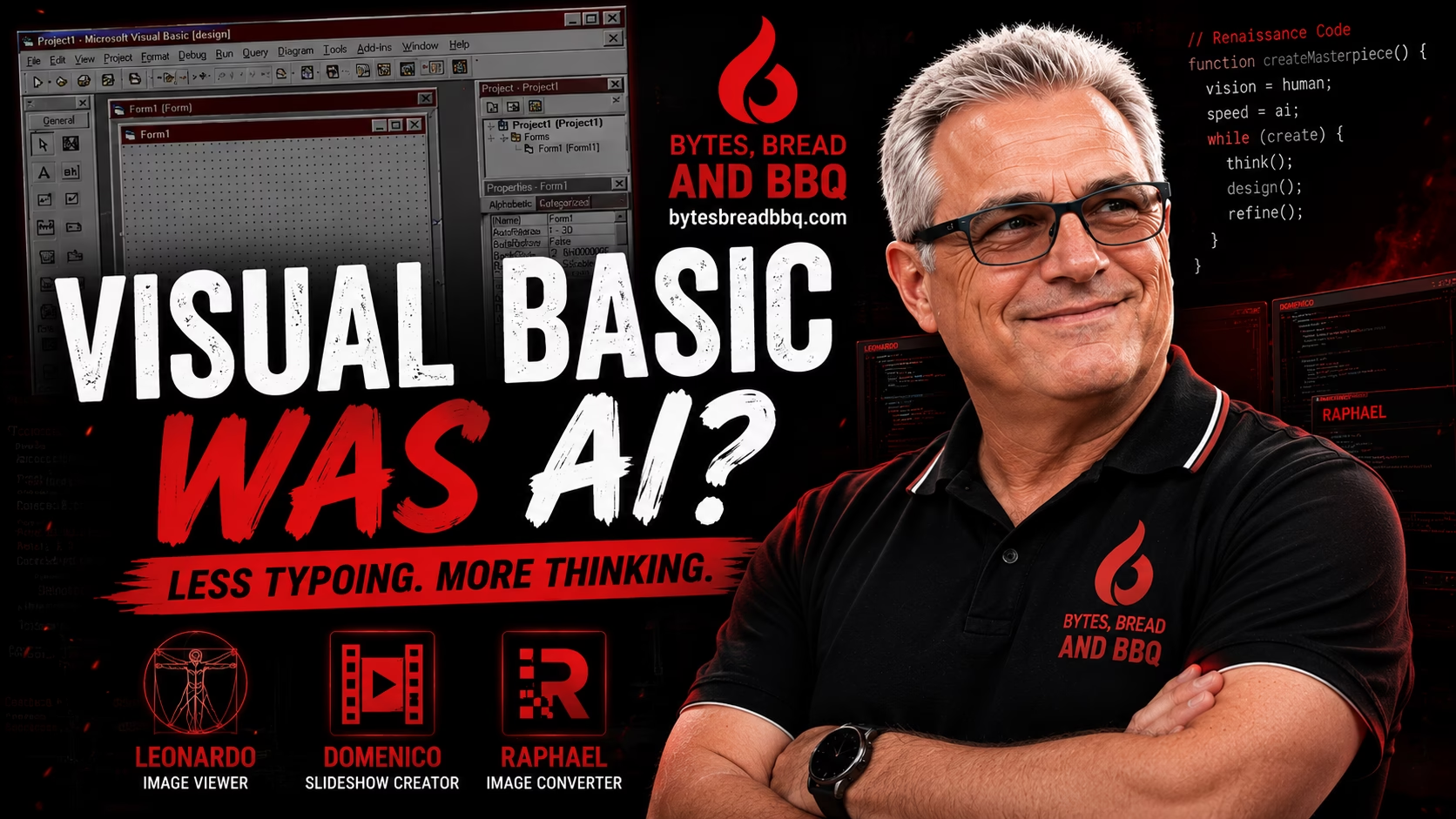

Or: How Programmers Have Been Letting Computers Do the Heavy Lifting Since Before AI Was Cool

What 1980s and 1990s programming taught me about modern AI-assisted development

There’s a debate raging right now in the software world:

“Is AI-generated code real programming?”

Depending on which corner of the internet you wander into, AI is either:

- the greatest software breakthrough since the compiler

- or the technological equivalent of putting ketchup on a perfectly smoked brisket

As somebody who has been programming since the 1980s… I think we’ve seen this movie before.

And honestly?

Some of today’s AI arguments sound exactly like the things programmers used to say about Visual Basic back in the 1990s.

Before Visual Basic, Everything Was Manual

When I started programming, software development looked very different.

I wrote text-based applications using tools like Clarion under DOS. There were no fancy GUI designers. No drag-and-drop windows. No visual layout editors.

Everything was typed manually.

Every screen.

Every menu.

Every field position.

Every coordinate.

Programming felt incredibly close to the machine.

Back then, if your screen layout looked terrible, there was nobody to blame except yourself and possibly caffeine deprivation.

If you wanted a button on the screen, you wrote the code.

If you wanted a database field displayed properly, you wrote the code.

If something was misaligned by two spaces… you fixed it manually.

Then something happened that changed software development forever.

Visual Basic Arrived… And Many Developers Hated It

When Microsoft Visual Basic appeared in the early 1990s, it felt revolutionary.

Laying out a form no longer meant manually calculating screen positions like you were planning a moon landing.

Suddenly developers could:

- Draw windows with a mouse

- Drag buttons onto forms

- Resize interface elements visually

- Generate interface code automatically

- Focus more on application behavior than on raw window creation

To younger developers today, that probably sounds completely normal.

Back then?

It was controversial.

A lot of programmers argued:

- “That’s not real programming.”

- “Real programmers type everything manually.”

- “GUI builders are cheating.”

- “Visual Basic developers aren’t serious developers.”

Meanwhile the Visual Basic developers were over there shipping applications while everybody else was still arguing about whether using a mouse violated the sacred programmer code.

Sound familiar?

Because that’s almost identical to the conversation happening around AI-assisted programming today.

Was Visual Basic an Early Form of AI-Assisted Development?

No, Visual Basic was not artificial intelligence in the modern sense.

But conceptually?

I think it represented an early shift toward higher-level, intent-based programming.

Instead of manually coding every interface element, the programmer described what they wanted visually.

The tool handled the repetitive implementation details.

That idea should sound very familiar to modern developers using:

- ChatGPT

- Codex

- Cursor

- Claude

- GitHub Copilot

Today we increasingly describe functionality in human language.

Back then we described interfaces visually.

In both cases the programmer moved one level farther away from manually flipping bits over an imaginary campfire.

The abstraction layer changed.

But the direction stayed the same.
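
To make that shift concrete, here is a minimal Python sketch. It is not Clarion or Visual Basic code, and every name in it (`put`, `manual_screen`, `build_screen`) is made up for the illustration. The first function positions each label and field by hand, the way those DOS screens were built. The second only lists the fields it wants and lets a tiny "tool" work out the coordinates.

```python
# Hypothetical illustration only: not Clarion or Visual Basic code.

WIDTH, HEIGHT = 40, 5
LEFT_MARGIN = 2
FIELD = "[__________]"


def blank_screen():
    """Return an empty text screen as a list of rows of characters."""
    return [[" "] * WIDTH for _ in range(HEIGHT)]


def put(screen, row, col, text):
    """Write text onto the screen at an explicit row and column."""
    for offset, ch in enumerate(text):
        screen[row][col + offset] = ch


def manual_screen():
    """The old way: the programmer types every coordinate by hand."""
    screen = blank_screen()
    put(screen, 1, 2, "Name:")
    put(screen, 1, 10, FIELD)
    put(screen, 3, 2, "Phone:")
    put(screen, 3, 10, FIELD)
    return screen


def build_screen(labels):
    """The tool's way: the programmer lists fields; the tool picks positions."""
    screen = blank_screen()
    # Line up every input box two columns past the longest "Label:" text.
    input_col = LEFT_MARGIN + max(len(label) + 1 for label in labels) + 2
    for index, label in enumerate(labels):
        row = 1 + index * 2  # one blank row between fields
        put(screen, row, LEFT_MARGIN, label + ":")
        put(screen, row, input_col, FIELD)
    return screen


def render(screen):
    """Join the character grid into printable text."""
    return "\n".join("".join(row).rstrip() for row in screen)


if __name__ == "__main__":
    print(render(manual_screen()))
    print(render(build_screen(["Name", "Phone"])))
```

Both calls draw the same screen. The difference is who did the arithmetic: in the first version the programmer did, and in the second the programmer only stated the intent.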

The Interesting Inversion

Here’s the part that fascinates me.

Visual Basic automated the interface.

The programmer still wrote most of the functionality.

Modern AI tools are almost the reverse.

Today:

- The developer designs the experience

- The AI helps generate functionality

- The human becomes architect, reviewer, and decision-maker

That doesn’t eliminate programming.

It changes the role of the programmer.

And honestly?

The software industry has been moving in this direction for decades.

We’ve Been Abstracting Complexity For Years

Think about the evolution of programming:

- Assembly language abstracted machine code

- Higher-level languages abstracted assembly

- Frameworks abstracted operating system details

- GUI builders abstracted window management

- ORMs abstracted database communication

- Cloud platforms abstracted infrastructure

- AI tools now abstract portions of implementation

Every generation of programmers eventually faces the same uncomfortable question:

“If the tool does more work… what exactly is my role now?”

This debate has existed for decades.

Assembly programmers rolled their eyes at higher-level languages.

C programmers mocked Visual Basic.

Old-school Linux users still occasionally glare suspiciously at GUI package managers.

And now developers are arguing over AI-generated code like somebody just suggested deep-frying the Thanksgiving turkey indoors.

That question is not new.

What’s new is how quickly the abstraction is advancing.

Something Is Gained… And Something Is Lost

I don’t think the critics are completely wrong.

Every abstraction layer creates tradeoffs.

When programming moves farther away from the machine:

- productivity increases

- accessibility increases

- development speeds up

But sometimes:

- deep understanding decreases

- low-level knowledge fades

- developers become more dependent on tools

It’s a little like barbecue.

Pellet grills are incredibly convenient.

Offset smokers require more skill.

Both can produce great results.

But one definitely keeps you closer to the fire.

We saw that happen with Visual Basic.

And I think we’re seeing it again with AI-assisted programming.

My Own Experience With AI Programming

Recently I experimented with multiple development approaches across my own applications.

Some projects were heavily hand-coded.

Some used AI as an assistant.

One application was created almost entirely through AI-assisted workflows.

The results were fascinating.

Some AI-generated code felt surprisingly solid.

Some felt like it had been assembled at 2 a.m. by a sleep-deprived intern fueled entirely by energy drinks and Stack Overflow snippets.

The AI-generated application worked.

It was logical.

It was productive.

But it also felt… different.

The architecture still mattered.

The design decisions still mattered.

The judgment still mattered.

The typing mattered less.

The thinking mattered more.

Which honestly might terrify programmers who still judge coding ability by how aggressively somebody attacks a mechanical keyboard.

Good Developers Still Matter

I don’t believe AI replaces programmers.

And honestly?

I don’t think Visual Basic replaced programmers either.

The tools evolve.

The abstractions evolve.

The workflows evolve.

But software still needs:

- human judgment

- architecture

- creativity

- problem solving

- taste

- experience

That part remains deeply human.

Maybe the real lesson from the Visual Basic era is this:

The developers who survive technological shifts are usually the ones who adapt instead of arguing that the old way was the only “real” way.

Because history shows something pretty consistently:

The programmers who spend all their time yelling at new tools usually get replaced by the programmers quietly learning how to use them.

And after watching programming evolve for decades…

I suspect AI-assisted development is simply the next chapter in a story that has been unfolding for a very long time.

Final Thoughts

If you programmed during the Visual Basic era, I’d genuinely love to hear your perspective.

Did GUI builders feel controversial when they first appeared?

And for younger developers:

Does AI-assisted programming feel like a natural evolution of software development… or something fundamentally different?

The discussion itself might be one of the most interesting parts of this entire transition.

I created a short YouTube video on the topic if you want to see more: Visual Basic Was AI?